Claude Opus 4.7 Dethrones GPT-5.4 on Accounting AI Benchmark

Santiago is the co-founder of DualEntry. He previously co-founded Benitago, a digital consumer products group that raised $380 million in funding, grew to over 300 team members, and achieved $100M ARR over 8 years before its acquisition in 2024. Santiago has been featured in The Tim Ferriss Show, Forbes, The Wall Street Journal, and more. Originally from Venezuela, Santiago studied Computer Science at Dartmouth before leaving to launch Benitago. At DualEntry, Santiago writes about the future of AI in accounting, ERP modernization, and how finance teams can leverage technology to scale.

Anthropic released Claude Opus 4.7 today. Within hours, we ran it through the 2026 Accounting AI Benchmark - and it dethroned OpenAI’s GPT-5.4 to take the #1 spot on real-world accounting tasks. Here’s what the data shows.

Opus 4.7 already made headlines today for dethroning GPT-5.4 on coding benchmarks, scoring 64.3% on SWE-bench Pro versus GPT-5.4’s 57.7%. We wanted to see if the same held true for real-world accounting work.

It did. Opus 4.7 scored 79.2% overall accuracy across end-to-end accounting tasks, knocking OpenAI’s GPT-5.4 (77.3%) off the top spot it held since our last update. GPT-5.4-Nano (75.2%) and GPT-5.4-Mini (74.3%) round out the top four, but for the first time, OpenAI doesn’t own the #1 position. The full picture, though, is more nuanced than the headline suggests.

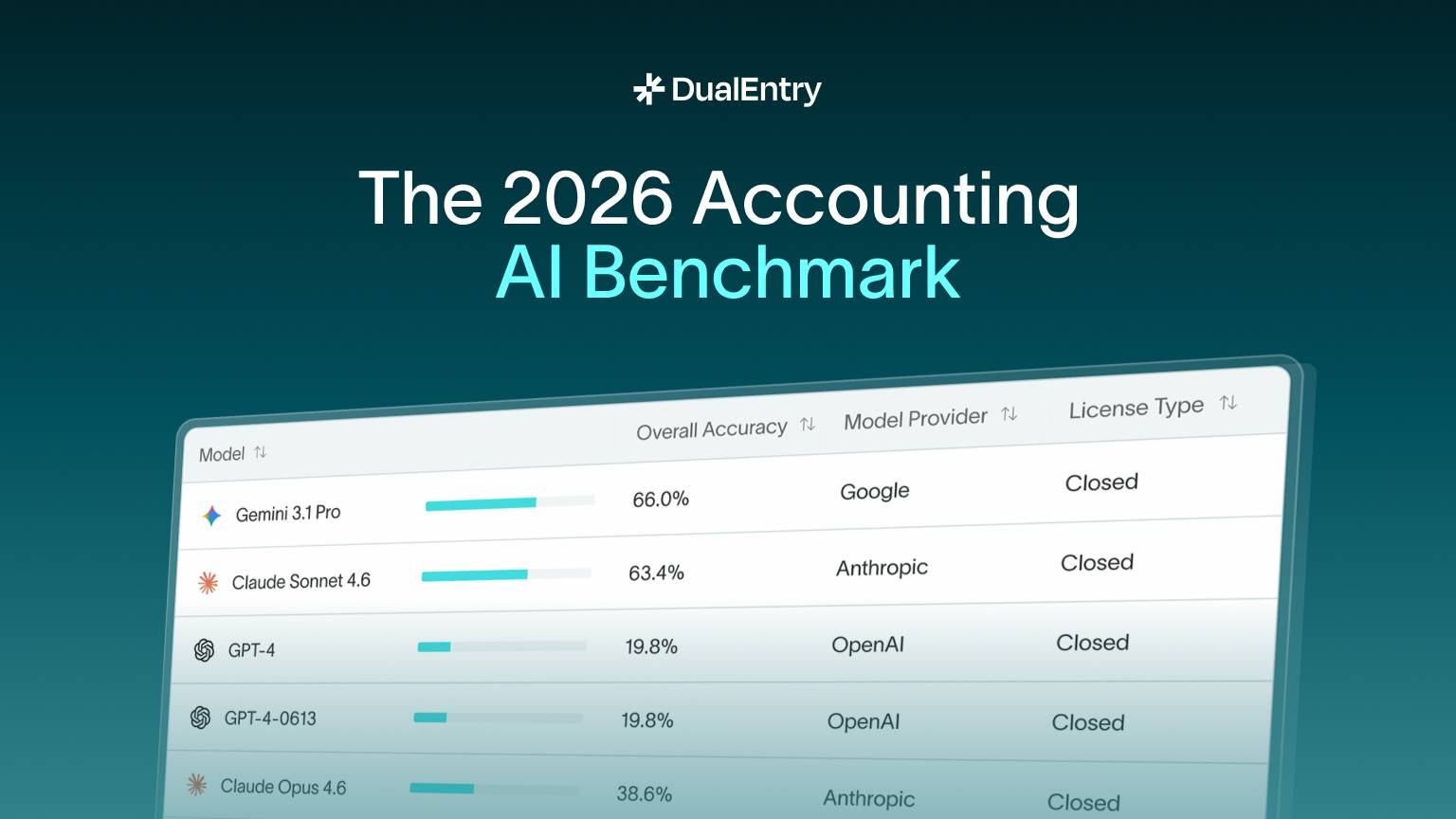

The full leaderboard

We tested 10 models from five providers - Anthropic, OpenAI, Google, MiniMax, and Zhipu AI - across four categories of accounting tasks: transaction classification, journal entries, month-end close, and financial reporting.

Opus 4.7 beat GPT-5.4 - but both models share the same blind spot

The overall accuracy gap between Opus 4.7 and GPT-5.4 is less than 2 percentage points. Where the data gets interesting is in the task-level breakdown.

Structured tasks: Opus 4.7 hit 92% accuracy on both transaction classification and journal entries. These are well-defined, rules-based tasks where AI can pattern-match effectively, categorizing expenses, generating debit/credit entries from descriptions, and mapping transactions to the right accounts.

Complex workflows: Performance dropped sharply on month-end close (50%) and financial reporting (62%). These tasks require multi-step reasoning, cross-referencing across accounts, applying judgment calls on accruals and adjustments, and producing coherent narrative outputs. Every model on the benchmark struggled here.

This isn’t unique to Opus 4.7. Every model we tested showed the same pattern: strong on structured, repetitive tasks; weak on the complex, judgment-heavy work that accountants actually get paid for.

Open-weight models are surprisingly competitive

Z.ai’s GLM-5 (72.3%) and MiniMax M2.7 (71.3%) both outperformed Google’s Gemini 3.1 Pro (66.0%) and Anthropic’s own smaller models - Claude Sonnet 4.6 (63.4%) and Claude Haiku 4.5 (61.4%).

For accounting firms evaluating AI tools, this means the best model for their use case isn’t automatically the most expensive one.

GPT dethroned, but no model has broken 80%

Opus 4.7 took the crown from GPT-5.4, but it’s worth noting how close the race is at the top. The gap between #1 and #2 is under 2 points. And no model has yet crossed the 80% accuracy threshold. That matters because real-world accounting requires near-perfect accuracy, a model that gets 1 in 5 entries wrong isn’t ready to operate without human oversight.

The lead could easily change with the next model update from either side. What won’t change anytime soon is the gap between structured tasks (90%+) and complex workflows (50–62%). That’s the real benchmark to watch.

About the benchmark

The 2026 Accounting AI Benchmark tests models on real accounting tasks, not synthetic reasoning problems. We evaluate accuracy across transaction classification, journal entries, month-end close procedures, and financial reporting - the core workflows that accounting teams perform daily.

We update the leaderboard as new models are released. Opus 4.7 was benchmarked within hours of its public release today.

Explore the Full Benchmark & Leaderboard →

Interactive results with filtering by model, provider, and task type